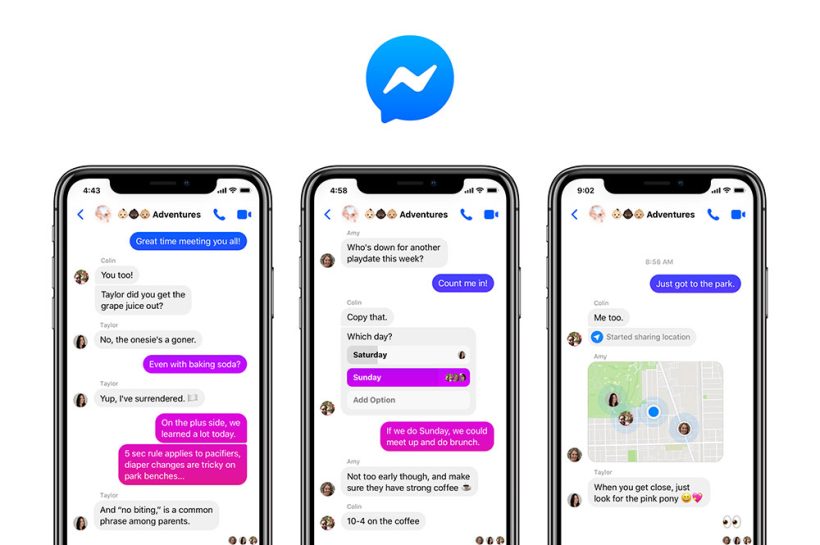

Facebook Messenger's Latest Feature: Play Games While on a Video Call

There are many chat apps out there, and one of the most widely used is Messenger, also known as Facebook Messenger. With this Facebook-owned service, users can chat with other Facebook users and take advantage of a range of useful features. Recently, Messenger added a new feature that lets users play multiplayer games while on a video call. For those of you…